For years, the promise of true self-driving has felt like a mirage: always on the horizon, shimmering with potential, yet frustratingly out of reach. The narrative oscillated between breathless predictions of imminent robotaxis and sobering reality checks after every edge-case incident. But for those of us who watch the industry’s pulse not through press releases, but through sensor specifications, regulatory filings, and the quiet expansion of operational design domains (ODDs), a different story is unfolding. Level 4 autonomy is now landing in its pre-scale phase: live in real cities, but not yet at mass-market volume. This isn’t a sudden, flashy consumer launch; it’s a deliberate, city-by-city handshake between technology and infrastructure that is fundamentally altering the DNA of mobility.

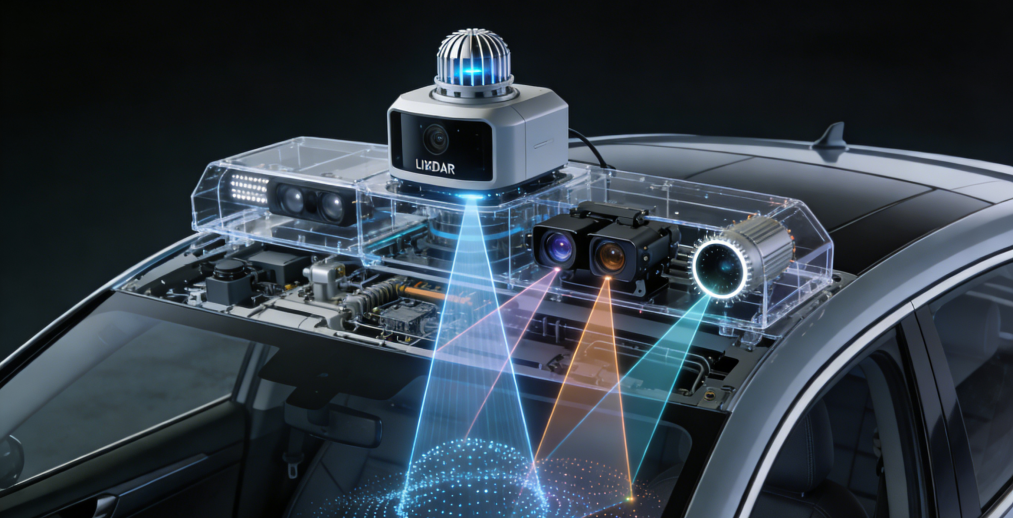

Let’s be clear: Level 4 (L4), as defined in SAE J3016, means the automated driving system performs the entire driving task, including falling back to a safe state on its own, within a specific geographic and environmental ODD and without expecting a human to take over. The breakthrough isn’t a single “aha!” moment, but a confluence of hard-won advances. First, the sensor suite has matured from a kludge of components into a cohesive perceptual organ. Next-gen solid-state LiDARs are achieving higher resolution with automotive-grade reliability and lower cost, while 4D imaging radars measure velocity and elevation as well as range and bearing, and keep working through fog and rain. Cameras, powered by neural networks trained on vast amounts of real-world driving data, are getting better at semantic understanding: not just seeing a shape, but comprehending that it’s a cyclist about to swerve or a construction worker’s subtle gesture.

More critically, the AI driver’s brain has evolved. The industry is moving beyond pure end-to-end neural networks and monolithic algorithms. The new paradigm is hybrid AI architecture, marrying deep learning’s pattern recognition with deterministic, rules-based safety models and sophisticated predictive behavior models. This system doesn’t just react; it anticipates. It runs thousands of probabilistic simulations per second to predict the paths of every road user, creating a buffer of safety and fluidity. “The vehicle’s planning stack now operates less like a simple controller and more like a grandmaster chess player, thinking multiple moves ahead within a complex, dynamic system,” observes a lead autonomy engineer at a firm currently deploying in multiple cities.
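
To make the hybrid idea concrete, here is a minimal sketch in Python. It is an illustration under loud assumptions, not any vendor's planning stack: the random-acceleration rollout is a stand-in for a learned behavior predictor, and the names `Agent`, `sample_lead_positions`, `violates_gap_rule`, and `choose_speed` are hypothetical. The probabilistic part samples many possible futures for a lead vehicle; the deterministic part is a hard minimum-gap rule that every sampled future is checked against.

```python
import random
from dataclasses import dataclass


@dataclass
class Agent:
    position_m: float  # distance ahead of the ego vehicle's starting point
    speed_mps: float


def sample_lead_positions(lead, horizon_s, dt_s, accel_std, rng):
    """Roll the lead vehicle forward with random accelerations.

    Stand-in for a learned prediction model (assumption for illustration).
    """
    pos, speed = lead.position_m, lead.speed_mps
    positions = []
    for _ in range(int(horizon_s / dt_s)):
        accel = rng.gauss(0.0, accel_std)
        speed = max(0.0, speed + accel * dt_s)  # vehicles do not reverse here
        pos += speed * dt_s
        positions.append(pos)
    return positions


def violates_gap_rule(ego_speed_mps, lead_positions, dt_s, min_gap_m):
    """Deterministic safety rule: ego at constant speed must keep min_gap_m."""
    for step, lead_pos in enumerate(lead_positions, start=1):
        ego_pos = ego_speed_mps * step * dt_s
        if lead_pos - ego_pos < min_gap_m:
            return True
    return False


def choose_speed(candidates, lead, n_samples=200, horizon_s=4.0, dt_s=0.5,
                 accel_std=1.0, min_gap_m=5.0, max_risk=0.01, seed=0):
    """Pick the fastest candidate whose sampled rule-violation rate is acceptable."""
    rng = random.Random(seed)
    rollouts = [
        sample_lead_positions(lead, horizon_s, dt_s, accel_std, rng)
        for _ in range(n_samples)
    ]
    for speed in sorted(candidates, reverse=True):
        violations = sum(
            violates_gap_rule(speed, rollout, dt_s, min_gap_m)
            for rollout in rollouts
        )
        if violations / n_samples <= max_risk:
            return speed
    return 0.0  # no candidate is acceptable: fall back to stopping


if __name__ == "__main__":
    lead = Agent(position_m=30.0, speed_mps=10.0)
    print(choose_speed([5.0, 10.0, 15.0, 20.0], lead))
```

The design point is the division of labor: the sampled rollouts express uncertainty about what others will do, while the gap rule stays simple enough to audit. A production system would predict many agents in two dimensions and evaluate full trajectories rather than constant speeds.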

This technical leap is only one half of the equation. The true story of “pre-scale” is the bidirectional dance with the city itself. This isn’t about dropping a perfect AI driver into our chaotic, analog world and hoping it adapts. It’s about a collaborative redesign.

Progressive cities are becoming active partners. They are deploying digital infrastructure that acts as a sixth sense for AVs. Smart intersections with V2X (vehicle-to-everything) communication broadcast real-time signal phase and timing (SPaT) data, allowing the AV to optimize its approach, reducing harsh braking and improving traffic flow. High-definition, dynamic maps, continuously updated via fleet data, provide a centimeter-accurate, layer-aware view of the world, including temporary construction zones or special event closures. In essence, the city is starting to speak the AV’s language.
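
As a rough sketch of how SPaT data can shape an approach, the Python below computes a speed advisory: the fastest speed within set limits that still reaches the stop line while the light is green. The `SignalWindow` fields and the `advisory_speed` function are hypothetical simplifications for illustration; they are not the layout of a real SPaT message, and a real system would also account for queues, acceleration limits, and clock uncertainty.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SignalWindow:
    green_start_s: float  # seconds from now until green begins (0 if green now)
    green_end_s: float    # seconds from now until green ends


def advisory_speed(distance_m: float, window: SignalWindow,
                   min_speed_mps: float, max_speed_mps: float) -> Optional[float]:
    """Return the fastest constant speed that arrives during green, else None."""
    if distance_m <= 0 or window.green_end_s <= 0:
        return None
    # Arriving any sooner than this would require exceeding the speed limit.
    earliest_arrival_s = distance_m / max_speed_mps
    # Do not arrive before the light turns green.
    arrival_s = max(earliest_arrival_s, window.green_start_s)
    if arrival_s > window.green_end_s:
        return None  # cannot reach the stop line before green ends
    speed = distance_m / arrival_s
    if speed < min_speed_mps:
        return None  # would need to crawl; better to plan a normal stop
    return speed


if __name__ == "__main__":
    window = SignalWindow(green_start_s=20.0, green_end_s=40.0)
    print(advisory_speed(200.0, window, min_speed_mps=5.0, max_speed_mps=15.0))
```

In the example, the vehicle is 200 m from a light that turns green in 20 seconds, so easing to 10 m/s lets it roll through at the start of green instead of braking hard at a red.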

The impact on the urban fabric is profound. Early deployment zones, often for robotaxi services in defined districts, are serving as living labs. We’re seeing the emergence of “Mobility Cells.” Traffic patterns are smoothing as AVs drive with consistent, defensive predictability, reducing the stop-and-go waves caused by human reaction times. This isn’t just theory; data from pilot corridors show measurable reductions in congestion metrics during operational hours.

For the enthusiast, this changes everything we thought about performance. The metric shifts from 0-60 mph times to efficiency and systemic optimization. An L4 vehicle’s “performance” is its ability to merge seamlessly into a complex traffic stream, to execute a perfectly smooth unprotected left turn across oncoming traffic, or to coordinate with other AVs at a four-way stop to clear the intersection faster than humans ever could. It’s a new kind of elegance.

The road ahead is mapped, but not without curves. Scaling beyond sun-drenched, geofenced districts means conquering the “long tail” of rare events—the plastic bag blowing across the road that looks just like a pedestrian from one sensor angle, the unprecedented detour. Regulatory harmonization remains a patchwork. And the ethical calibration of driving behavior in no-win scenarios requires ongoing, transparent public dialogue.

What we’re witnessing is not the arrival of a finished product, but the maturation of a new mobility ecosystem. The car is no longer an isolated vehicle; it’s a node in a networked urban system. The romance of driving isn’t dying; it’s evolving. For the true vehicle enthusiast, there’s a new kind of beauty to appreciate—not just in the roar of an engine, but in the silent, intricate ballet of a fleet of AVs flowing through a smart city, a testament to a decade of relentless engineering now finally finding its stage. The future of driving is arriving. It’s just doing so one intelligent intersection at a time.
