We stand at a confluence of scientific revolutions. Artificial intelligence systems exhibit emergent capabilities, gene editing tools offer the power to rewrite the code of life, and neurotechnologies promise to interface directly with consciousness. The velocity of these advances, however, has far outstripped the societal frameworks designed to guide them. The central question of our technological epoch is no longer only “can we do it?” but “should we, and if so, how?” This has ignited a global, multi-stakeholder reckoning over the ethical boundaries and governance models for technologies that are fundamentally recursive: they change the very societies that must judge them.

The regulatory challenge is unprecedented in its complexity. Unlike past industrial technologies, frontier innovations like AI and CRISPR are characterized by their dual-use plasticity, profound opacity, and potential for irreversible consequences. A large language model can democratize education or generate disinformation at scale with equal ease. A gene drive engineered to eliminate malaria-carrying mosquitoes could alter entire ecosystems. Traditional, siloed regulatory bodies—designed for pharmaceuticals, financial markets, or industrial equipment—are ill-equipped to assess systems whose risks are systemic, non-linear, and deeply entangled with human values.

The core tension lies in the clash of temporalities. The pace of technological iteration follows an exponential, competitive logic, driven by market forces and open-source collaboration. The pace of ethical consensus-building and regulatory adaptation, in contrast, is deliberative, incremental, and rooted in democratic and legal processes. This mismatch creates a dangerous governance gap where capabilities can be deployed long before their societal implications are fully understood or rules are established.

This gap manifests in three critical arenas:

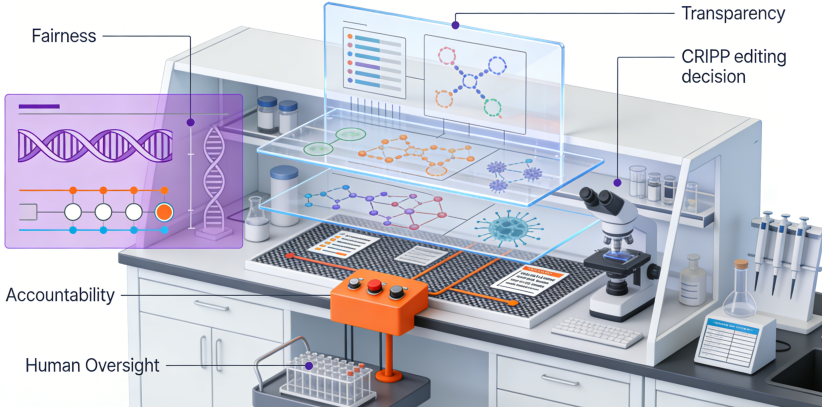

1. The Autonomy and Opacity of AI. As AI systems grow more complex, their decision-making processes become less interpretable, even to their creators. This “black box” problem raises acute issues of accountability. If an autonomous medical diagnostic AI errs, or a hiring algorithm perpetuates bias, who is responsible? Current liability law struggles to assign responsibility when no single human decision can be identified as the cause of the harm. Furthermore, the deployment of autonomous systems in warfare, law enforcement, and critical infrastructure forces a re-evaluation of human agency and control. The ethical imperative is moving from a principle of “human-in-the-loop” to a more robust “meaningful human control,” requiring technical architectures that ensure oversight and override capacity.

2. The Permanent Edit of Biology. Gene editing, particularly in human germline cells, presents a qualitative ethical leap. Somatic edits affect only the treated individual, but heritable edits can be passed on to future generations, raising the specter of unintended consequences and of socio-economic divides driven by “genetic enhancement.” The 2018 case of He Jiankui, who announced the birth of the first gene-edited babies, demonstrated the insufficiency of voluntary scientific self-regulation. It underscored the need for binding international standards with verification mechanisms, a task complicated by differing cultural, religious, and national perspectives on human dignity and the boundaries of therapy versus enhancement.

3. The Commodification of Consciousness and Identity. Emerging neurotechnologies that can decode mental states or modulate brain function threaten the last frontier of privacy: our inner thoughts. The data harvested from brain-computer interfaces could enable manipulation at a depth previously unimaginable. Similarly, generative AI’s ability to create synthetic media (deepfakes) and hyper-personalized persuasive content erodes both our shared perception of reality and individual autonomy. Protecting cognitive liberty, the right to self-determination over one’s own consciousness and mental data, is becoming an urgent legal and ethical priority.

Navigating this terrain demands a new paradigm for technology governance, one that is adaptive, anticipatory, and inclusive. Static, top-down regulation will fail. Instead, a multi-layered approach is emerging:

- Embedded Ethics by Design: Moving ethics from an external review board to a core engineering requirement. This involves developing technical standards for algorithmic fairness, transparency (XAI), and value alignment, building ethical constraints directly into systems.

- Agile and Anticipatory Regulation: Regulatory “sandboxes” allow for real-world testing of innovations within a supervised, time-bound framework. Horizon-scanning and participatory technology assessment exercises can help societies anticipate and deliberate on impacts before technologies are mature and widely deployed.

- Global Coordination on Foundational Norms: While full harmonization is unlikely, establishing red lines, such as global bans on lethal autonomous weapons or on heritable human germline editing, is critical. Forums like the UN, OECD, and the Global Partnership on AI are essential for building consensus among nations with competing interests.

- Empowered Civic and Multidisciplinary Deliberation: Decisions of such magnitude cannot be left to technologists and regulators alone. Robust public engagement, drawing on philosophy, sociology, law, and the arts, is necessary to define the collective values that should guide technological trajectories.

The goal is not to stifle innovation but to steer it toward human flourishing. The great test of the 21st century will be whether we can develop the wisdom and institutional capacity to govern the unprecedented power we are unleashing. It is a race not between companies or nations, but between our growing technological prowess and our evolving ethical and governance maturity. The outcome will define the character of our future society.
